How it works

It's simple, in a way.

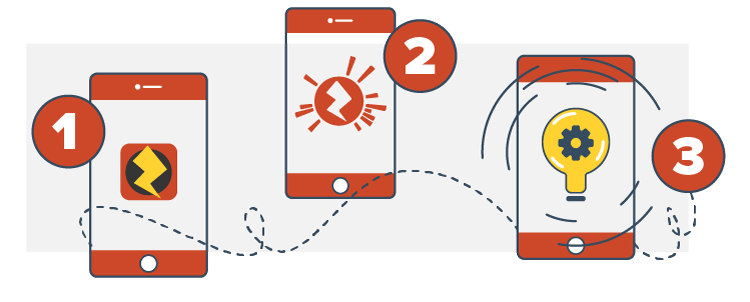

For the user, the process is simple: you download the free Zappar app, which opens up a camera view in "scanning" mode. Once the app detects something that's Zappar Powered, it assembles all the content and brings that thing to life on-screen in front of your very eyes.

As for the phone, there's a lot going on behind the scenes! We'll save you (and us) from the 100+ pages of technical documentation underlying the algorithm, but here's a potted outline of what's happening.

Thirty times a second, the app reads an image from the camera and searches it for little distinctive corners and shapes. Once it has found a good number of them, it compares them with a database of the corners and shapes it knows to look for: the distinctive features of the "target" image that it's going to bring to life.

Matching features let the app know not only that it has found the target, but also where that target is in the camera image. Armed with this knowledge, the app places the 3D experience in that place. Huzzah!
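For the curious, here's a toy sketch of that match-and-locate step in Python. It is not Zappar's actual algorithm: the `(x, y, descriptor)` feature format, the function names and the thresholds are all assumptions made up for illustration, and it only recovers a simple left/right, up/down offset, whereas a real tracker would also work out rotation, scale and perspective.

```python
from statistics import median

# A feature is a hypothetical (x, y, descriptor) tuple, where the descriptor
# is an integer whose bits summarise the pixels around a corner.

def hamming(a, b):
    """Number of bit positions in which two descriptors differ."""
    return bin(a ^ b).count("1")

def match_features(frame_features, target_features, max_distance=2):
    """Pair each camera feature with its closest target feature, if close enough."""
    matches = []
    for fx, fy, fdesc in frame_features:
        tx, ty, tdesc = min(target_features, key=lambda t: hamming(fdesc, t[2]))
        if hamming(fdesc, tdesc) <= max_distance:
            matches.append(((fx, fy), (tx, ty)))
    return matches

def locate_target(matches, min_matches=3):
    """Return the (dx, dy) offset of the target in the frame, or None if not found."""
    if len(matches) < min_matches:
        return None  # too few matching features: the target is not in view
    # The median offset shrugs off the odd bad match.
    dx = median(f[0] - t[0] for f, t in matches)
    dy = median(f[1] - t[1] for f, t in matches)
    return dx, dy

# Made-up data: the target's four features, then the same features seen
# 100 pixels right and 50 pixels down, plus one unrelated corner.
target = [(0, 0, 0b10110010), (10, 0, 0b01001101),
          (0, 10, 0b11100001), (10, 10, 0b00011110)]
frame = [(100, 50, 0b10110010), (110, 50, 0b01001100),
         (100, 60, 0b11100001), (110, 60, 0b00011110),
         (5, 5, 0b00000000)]
print(locate_target(match_features(frame, target)))
```

The second frame feature has one bit flipped to mimic camera noise and still matches, while the unrelated corner differs from every target feature by four bits and is ignored.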

What is a zapcode and why does it matter?

The problem a lot of other AR systems face is having to detect thousands of different target images at the same time. It's easy to understand why this is hard when you consider that a lot of images look very similar. Our solution, the zapcode, sidesteps the problem.

Think of a zapcode as a QR code on steroids that turns a device into a gateway to multimedia content.

This little icon lets the user know there's AR content available. Surrounding the zapcode (bolt) is a special arrangement of bars called "bits". These tell the app which piece of AR content to download and augment on the image. It doesn't matter if the image looks similar to others in the system – since it's the code (rather than the image) that identifies the content, you're always going to get the right experience.
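To make the idea concrete, here's a minimal Python sketch of turning a row of bars into a content lookup. Everything in it is an assumption for illustration: the six-bar code, the most-significant-bit-first ordering, the `CATALOG` table and the experience names are invented, and a real zapcode will have its own layout and encoding.

```python
def bits_to_code(bits):
    """Pack a sequence of bars (truthy = present) into one integer."""
    code = 0
    for bit in bits:
        code = (code << 1) | (1 if bit else 0)
    return code

# Hypothetical catalogue mapping codes to experiences.
CATALOG = {
    0b101101: "cereal-box-game",
    0b110010: "magazine-cover-video",
}

def content_for(bits, catalog=CATALOG):
    """Return the experience a code points at, or None if it is unknown."""
    return catalog.get(bits_to_code(bits))

print(content_for([1, 0, 1, 1, 0, 1]))
```

Because the lookup depends only on the bits, two near-identical images carrying different codes still lead to different experiences.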